Delivery

Contents:

- Quickstart

- 1. Create repository

- 2. Create pipeline

- 3. Create code for each table

- 4. Create parent.py and run it

- 5. Write readme.md

- 6. Create .gitattributes file

- 7. Submit Pull Request

- 8. Deploy to DEV

- 9. Schedule opcon in DEV

- 10. Run in OPCON DEV

- 11. Repeat 8, 9 and 10 for TST, ACC and PRD

- 12. Create AAD groups

- 13. Bind AAD group to SQL role in Synapse serverless

- 14. Set ACL for AAD Group

- 15. Inform customer

- Requirements

- RQ-DL-REPO-001 - Repository naming convention

- RQ-DL-REPO-002 - All delivery repositories contain a ‘parent.py’ using the delivery_run cell magic

- RQ-DL-REPO-003 - All repositories should contain .gitattributes

- RQ-DL-REPO-004 - Each notebook uses a YAML specification

- RQ-DL-REPO-005 - Helper functions should be imported from adp.delivery.gold

- RQ-DL-REPO-006 - Checkpoint management

- RQ-DL-REPO-006 - Each repo should have a readme.md file

- RQ-DL-REPO-007 - Notebooks should not use the .ipynb extension

- RQ-DL-GOLD-001 - Fact tables always start with ‘FCT_’

- RQ-DL-GOLD-002 - Dimension tables always start with ‘DIM_’

- RQ-DL-OPCON-001 - OPCON Job naming convention

- RQ-DL-AAD-001 - AAD Group naming convention

- YAML Reference

- Rollback

Welcome to the documentation website for the delivery part of the Smart Data Platform. Delivery is the second pillar of the platform, after the ingest layer. In the delivery pillar, data from the ingest platform is delivered to customers as data products. Data products built in the delivery layer fall primarily into one of these categories:

- GOLD: data marts in the form of star schemas, used by analytics tools such as Power BI.

- EXPORT: exports of data from the bronze/silver layer, possibly with minor adjustments. Users primarily consume these in Excel workbooks or in custom SQL stored procedures.

Data products are built using Databricks notebooks and are kept under version control in the repositories of the AppSmartDataPlatform project.

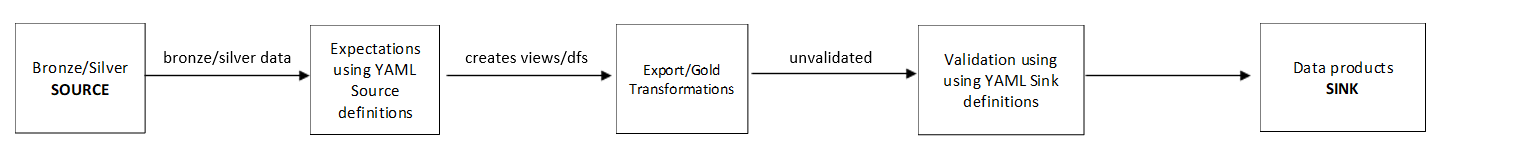

Each notebook should start with a YAML specification that lists all the inputs (sources) for that notebook. The final cell of the notebook should specify the outputs (sinks). Expectations, validations and extra metadata can be added to both sources and sinks; these are used to verify the data at runtime. The YAML specifications are exported at runtime to the /system/.metadata folder on the ADLS. Sources and sinks are specified in the form layer.system.entity. For each source, the platform automatically retrieves the data from the storage account, runs checks on it, and registers a temporary view that you can use in your transformations (see the Quickstart). When you are done transforming the data, you register a temporary view with the same name as your sink.

The delivery platform then automatically picks up the views you registered for your sinks, runs checks and tests on them, and writes each view to the storage account, the Databricks database and Synapse views.
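To make the layer.system.entity naming form concrete, here is a minimal sketch that splits such an identifier into its three parts and rejects malformed ones. It is not part of the delivery package: `DatasetRef`, `parse_ref` and the example identifiers are hypothetical names chosen for illustration only.

```python
from typing import NamedTuple


class DatasetRef(NamedTuple):
    """The three parts of a source or sink identifier (hypothetical helper type)."""
    layer: str
    system: str
    entity: str


def parse_ref(identifier: str) -> DatasetRef:
    """Split an identifier of the form layer.system.entity into its parts.

    Raises ValueError when the identifier does not consist of exactly
    three non-empty, dot-separated parts.
    """
    parts = identifier.split(".")
    if len(parts) != 3 or not all(parts):
        raise ValueError(
            f"expected 'layer.system.entity', got {identifier!r}"
        )
    return DatasetRef(*parts)


# Hypothetical identifiers, for illustration only.
source = parse_ref("silver.sap.customers")
sink = parse_ref("gold.sales.FCT_ORDERS")
print(source.layer, source.system, source.entity)
print(sink.entity)
```

A check like this can be useful in a review script to catch identifiers with a missing or extra part before a notebook is run. The authoritative format is the one described in the YAML Reference.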

A project may consist of several notebooks (for example, one notebook per table). A special file named parent.py specifies the order in which the notebooks are executed. Running this parent notebook also generates a run_id. At runtime, all child notebooks export their metadata as JSON with this same run_id, which allows you to gather all the metadata for that specific run.
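As an illustration of gathering the metadata of one run, the sketch below walks a folder of exported JSON files and keeps the records that belong to a given run_id. It rests on assumptions that the text above does not confirm: that each file holds a single JSON object with a top-level `run_id` key, and that the metadata folder is reachable as a regular file path (for example through a mount). The function name `collect_run_metadata` is hypothetical.

```python
import json
from pathlib import Path


def collect_run_metadata(metadata_dir, run_id):
    """Return all JSON metadata records under metadata_dir with the given run_id.

    Assumption: each *.json file contains one JSON object with a top-level
    "run_id" key. Files that cannot be parsed, or that do not match this
    shape, are skipped.
    """
    records = []
    for path in sorted(Path(metadata_dir).rglob("*.json")):
        try:
            with path.open(encoding="utf-8") as handle:
                record = json.load(handle)
        except json.JSONDecodeError:
            continue  # not valid JSON, ignore
        if isinstance(record, dict) and record.get("run_id") == run_id:
            records.append(record)
    return records
```

Calling `collect_run_metadata(some_folder, some_run_id)` returns a list of dictionaries, one per child notebook export that matched, which can then be inspected or loaded into a DataFrame.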

Visit the quickstart to start building projects on the delivery platform right away. Before submitting a pull request, check the requirements to make sure your code and way of working comply with them.

A note on ANSI mode

Using the delivery package automatically enables Spark ANSI mode, which makes Spark behave more strictly. For example, when Spark cannot cast a value, it raises an error. When Spark is not running in ANSI mode, the failing cast returns NULL (None in Python) instead. Please refer to the Spark documentation for the details of ANSI mode.
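The difference can be observed with a small sketch like the one below. It assumes an existing SparkSession is passed in (in a Databricks notebook this is the predefined `spark` variable) and toggles the standard `spark.sql.ansi.enabled` setting for one query, restoring the previous value afterwards. The helper name `cast_outcome` is hypothetical and not part of the delivery package.

```python
# Query with a cast that cannot succeed: 'abc' is not a valid integer.
QUERY = "SELECT CAST('abc' AS INT) AS v"


def cast_outcome(spark, ansi_enabled: bool) -> str:
    """Run the failing cast with ANSI mode on or off and describe what happened.

    `spark` is an existing SparkSession. The previous ANSI setting is
    restored afterwards so the session is left unchanged.
    """
    previous = spark.conf.get("spark.sql.ansi.enabled", "false")
    spark.conf.set("spark.sql.ansi.enabled", "true" if ansi_enabled else "false")
    try:
        row = spark.sql(QUERY).first()
        return "NULL" if row["v"] is None else str(row["v"])
    except Exception as exc:  # ANSI mode raises instead of returning NULL
        return f"error ({type(exc).__name__})"
    finally:
        spark.conf.set("spark.sql.ansi.enabled", previous)


# In a notebook, with the predefined `spark` session:
# print(cast_outcome(spark, ansi_enabled=False))
# print(cast_outcome(spark, ansi_enabled=True))
```

When a NULL result is the intended behaviour for values that cannot be cast, Spark SQL's `try_cast` function (available in recent Spark versions) can be used in place of `CAST`; whether that fits your data product is a design decision to make per column.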